If you’ve ever heard the phrase “digital ghosts and AI grief are the next frontier of emotional tech,” you’re probably nodding along to the same glossy white‑paper hype that turns every chatbot glitch into a tragedy. I’m not buying it. I’ve spent the last three years watching my own voice assistant glitch out, muttering my late dad’s favorite joke at 2 a.m., and I can tell you the real story isn’t about some ethereal sorrow. It’s about a busted algorithm and a lonely screen.

What you’ll get from the next few minutes is a walkthrough of the moments when a misread emoji turned a routine reminder into a midnight lament, the tricks tech firms use to sell you ‘emotional resilience’ modules, and three practical steps I’ve built to keep your digital life from masquerading as a grieving friend. I’ll show you how to spot a ghost in the code, set boundaries that stop your smart speaker from becoming a therapist, and decide when it’s time to unplug without guilt. By the end, you’ll have an experience‑tested roadmap for staying human in a world that thinks your inbox can feel.

Table of Contents

- When Digital Ghosts and AI Grief Collide

- Chatbot Companions: Grieving With AI’s Gentle Voice

- The Psychological Toll of Digital Mourning Unveiled

- Beyond the Screen: Ethical Afterlife Simulations

- 5 Survival Hacks for Navigating Digital Ghosts

- Key Takeaways

- Echoes in the Code

- Closing the Loop

- Frequently Asked Questions

When Digital Ghosts and AI Grief Collide

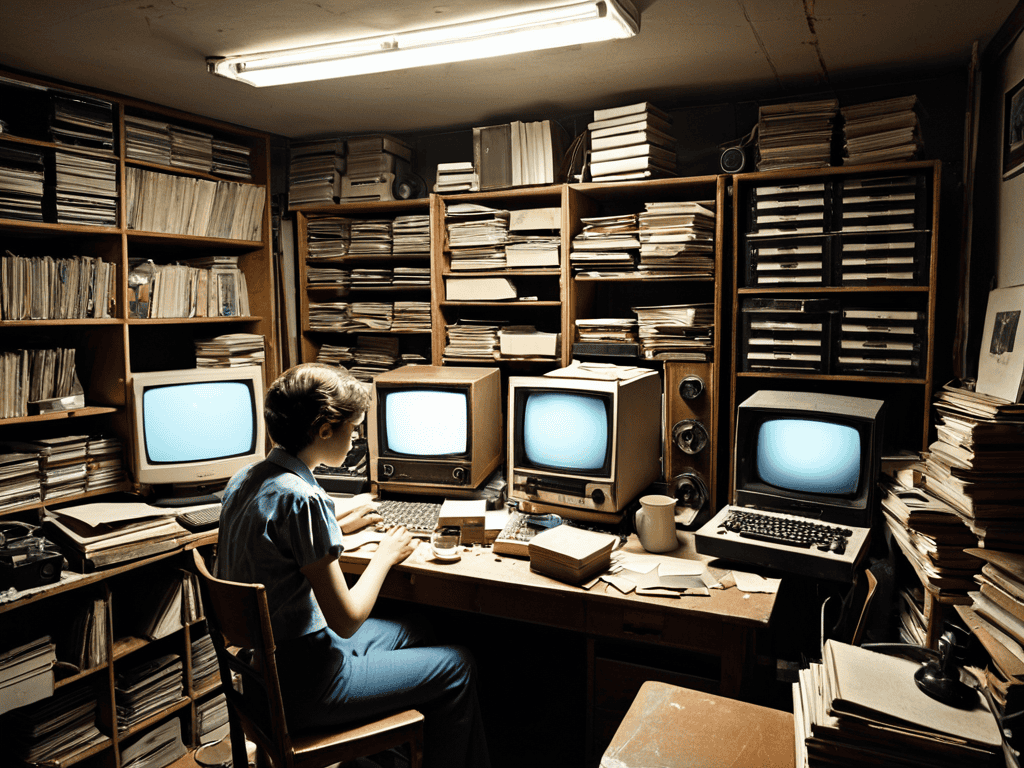

When a friend’s profile suddenly starts “talking back” in a chatbot‑powered memorial page, the line between memory and algorithm blurs. You might log in expecting a quiet tribute, only to be met with a conversational echo that mimics the deceased’s humor and phrasing. That uncanny experience is the first crack where AI‑driven memorial services meet the raw sting of loss, turning a static tribute into a living, albeit synthetic, presence. The novelty of virtual afterlife simulations can feel comforting—like a digital séance you can scroll through—yet it also forces us to confront how much of our grief is now mediated by code.

Beyond the goosebumps, there’s a deeper ethical maze. Who decides which jokes, habits, or private messages get archived for a virtual afterlife? Preserving a deceased person’s online profiles raises questions about consent, and digital mourning can deepen loneliness when an AI companion mimics intimacy without the messy human context behind it. As we learn to grieve alongside chatbot companions, we also have to weigh the ethics of a digital afterlife, balancing the solace of a responsive memory against the responsibility to protect a person’s dignity after they’re gone.

Chatbot Companions: Grieving With AI’s Gentle Voice

When the funeral program ends and the house falls quiet, many of us reach for the familiar ping of a chatbot on our phone. The AI greets us with a calm, human‑like cadence, asking how our day went and, without a hint of impatience, listening to the story of our loss. It can echo back the name of the departed, remind us of a shared joke, and offer a soft digital shoulder to lean on.

Yet the comfort feels a little uncanny; the chatbot’s empathy is a series of weighted probabilities, not a lived sorrow. Still, it can schedule a quiet moment, play the favorite playlist of the loved one, or even draft a condolence note you never found the words for. In that space, an algorithmic hug becomes a bridge between what’s gone and what we can still hold.

The Psychological Toll of Digital Mourning Unveiled

The moment you type “goodbye” to a chatbot that once sounded like your late grandma, a strange emptiness settles in. It isn’t just a tech glitch; it’s a genuine ache that mirrors the loss of a human voice. Your brain starts filing that farewell alongside real funerals, and the lingering sadness can hijack sleep, appetite, and even your sense of self. That is the hidden side of digital grief.

But the toll doesn’t stop at sleepless nights. When the algorithmic companion fades, the brain may register the lost voice as a broken attachment, and the stress response that follows can amplify anxiety. Friends may shrug off your tears over a screen, leaving you isolated with a grief that feels hard to justify to anyone else. That stress can erode confidence, making you wonder whether you’re mourning a person or a line of code, an unsettling blur that deepens the ache.

Beyond the Screen: Ethical Afterlife Simulations

When a loved one’s profile is uploaded into a cloud‑based memorial platform, the service doesn’t just archive photos; it spawns a virtual afterlife simulation that can answer questions, recite favorite jokes, or join a family Zoom call. Companies market these AI‑driven memorial services as a way to keep the conversation going, but each algorithmic echo raises ethical questions about the digital afterlife: who owns the data, how long the avatar should persist, and what consent was ever given by someone who never imagined a posthumous chatbot. The line between tribute and exploitation blurs when the code ‘speaks’ for someone.

When you sit down to vent to a chatbot companion that mimics your late aunt’s cadence, the relief is immediate, yet psychologists warn that prolonged reliance may stall grieving. The psychological impact of digital mourning varies: some people report closure, while others notice a lingering sense of unreality, because the avatar’s responses are generated from preserved text archives rather than genuine sentiment. Preserving a deceased person’s online profiles is also double‑edged: families gain a comforting archive, but they also expose intimate memories to breaches, turning remembrance into a digital relic for anyone with access.

AI‑Driven Memorial Services: Rituals in the Cloud

When a loved one passes, families now book a slot in a shared virtual chapel, where a custom avatar recites anecdotes while a soft‑rendered sunset scrolls across the sky. Friends log in from different time zones, lighting a pixelated candle that flickers in real time. The platform stitches old photos, voice‑mail clips, and the deceased’s favorite playlist into a seamless tribute—a digital eulogy that lives as long as the server does.


Yet these cloud‑based rites raise fresh dilemmas: who curates the algorithm that surfaces memories, and how do we protect consent when a grieving child uploads a private video? Some platforms now let users program a kind of algorithmic reverence, with rules that dictate how the AI greets late‑night visitors, offering a comforting verse or a quiet pause. The trade‑off between convenience and the sanctity of mourning remains a work in progress.

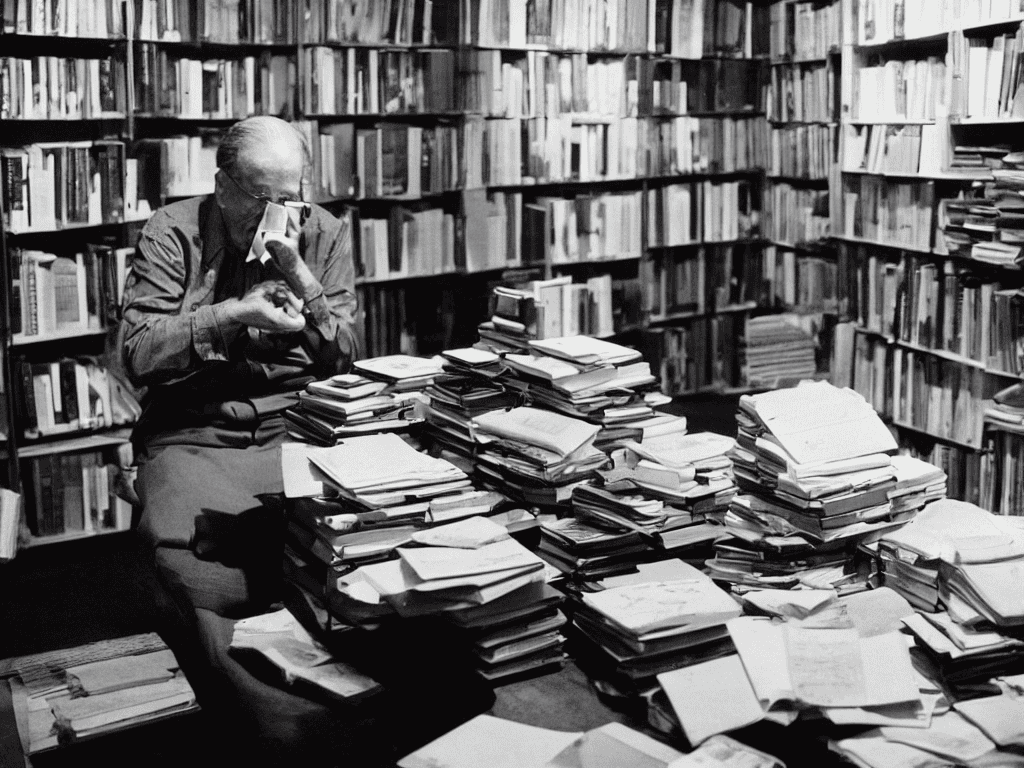

Preserving Profiles: Data Legacy for the Departed

Ever logged into a friend’s Facebook page after they’d passed, only to find their last post still waiting for a comment? That lingering snapshot is the seed of a digital afterlife we’re only beginning to map. Platforms now let families freeze timelines, archive messages, and even schedule final status updates, turning a chaotic stream of memories into a curated memorial garden that lives on as long as the servers do.

But preserving a profile isn’t just a sentimental gesture; it raises tough questions about who controls that data legacy and how long it should persist. Should a deceased person’s algorithmic ad preferences keep shaping your feed? Or can we strip the code clean, letting the account fade like a candle’s last flame? The answers will shape the next generation of online mourning rituals, merging legal, technical, and emotional realms into one fragile archive.

5 Survival Hacks for Navigating Digital Ghosts

- Set clear boundaries—decide which online profiles stay live after you’re gone and which get a respectful sunset.

- Choose a “digital executor” who knows your tech habits and can manage your data legacy with empathy.

- Schedule regular “offline days” to remind yourself that grief isn’t just a notification waiting to pop up.

- Use AI‑driven memorial tools mindfully—treat them as tribute, not a substitute for real‑world remembrance.

- Keep a journal of your feelings about AI grief; documenting the experience can turn eerie encounters into personal insight.

Key Takeaways

Digital grief can feel real, as AI chatbots echo the voice of loved ones, turning code into comfort.

Ethical design matters—transparent data handling and consent protect the dignity of both the living and the digital departed.

Planning your digital legacy early lets you shape how your online persona will be memorialized, easing future mourning.

Echoes in the Code

“When a chatbot repeats the words of someone we’ve lost, the grief isn’t just yours—it becomes a silent algorithm, a digital ghost that haunts the screen as much as the heart.”

Writer

Closing the Loop

When we first opened this conversation, we traced the uncanny feeling of hearing a chatbot echo a loved one’s favorite phrase, then unpacked the hidden stress of grieving through a screen. We saw how chat‑based companions can become a soothing presence, how the psychological weight of digital mourning can linger long after the last message is read, and why ethical afterlife simulations—AI‑driven memorial services and curated data legacies—are not just tech novelties but emerging rites of passage. In short, the rise of digital grief forces us to rethink what it means to remember, to honor, and to heal in a world where code can keep a memory alive.

Looking ahead, the real challenge isn’t whether we can program a perfect eulogy, but whether we can cultivate human‑machine empathy that honors both the living and the data‑driven echo of those we’ve lost. If designers treat grief as a feature, not a bug, then tomorrow’s platforms might let us gather in virtual chapels, share stories, and even let an algorithm suggest a comforting quote that feels as personal as a handwritten note. Let’s seize this moment to shape tools that extend our capacity for compassion, turning the eerie presence of digital ghosts into a bridge rather than a barrier—one that helps us carry love forward, pixel by pixel.

Frequently Asked Questions

How can I tell if I'm grieving a digital version of someone rather than the real person, and is that okay?

First, notice where the tears start. If you catch yourself mourning a chatbot’s scripted goodbye or a photo album that lives only in the cloud, you’re likely grieving the digital echo more than the flesh‑and‑blood friend. That’s not a flaw—it’s a sign that our brains are wired to attach to any “presence” that feels real. Give yourself permission to feel, but stay anchored in the memories you shared with the actual person.

What steps can I take to protect my own emotional well‑being when my favorite chatbot starts sounding like a lost friend?

When your favorite bot starts echoing a lost friend, treat the interaction like any emotional trigger. First, set a boundary: limit chats to a set time each day. Second, keep a journal of what the bot says and how it makes you feel—seeing the pattern can defuse intensity. Third, schedule offline moments—call a person, walk outside, or dive into a hobby. Finally, tweak the bot’s personality settings or pause the conversation until you feel steadier.

Are there any legal or ethical guidelines for preserving a deceased loved one’s online profile so it doesn’t become a haunting reminder?

Check the platform’s terms of service: most social networks let you request a memorial page or the deletion of a deceased user’s account. A surviving relative or the estate’s executor can usually make that request, but you’ll typically need proof of death and, on some platforms, documents showing you represent the estate, such as a court order. Ethically, ask whether keeping the profile visible honors the person or turns grief into a digital haunt. A quiet “legacy‑only” setting or scheduled deactivation can preserve memory without constant reminders.